Capella Space


March 26, 2019

If you are developing deep learning applications with SAR data, we’d love to hear from you. In the coming months, we’ll be rolling out high-resolution, high-revisit SAR training data sets for a variety of commercial and government use cases. If you are working on object detection, change detection, or other deep learning applications with SAR data, contact us to share your work and the challenges you are facing. Let’s get the conversation started.

Above: High-resolution (25 cm) SAR data from F-SAR, a DLR airborne system. Image courtesy of DLR, the German Aerospace Center.

Introduction

While the hype cycle for deep learning seems to be dying down a bit (neural network AI is simple!) and the resurgence of neural networks and computer vision is becoming the norm, many useful applications of these technologies have emerged in remote sensing over the past five years. Object detection and land cover classification appear to be the most researched and commercialized applications of deep learning in remote sensing, but other areas have also benefited, including data fusion, 3D reconstruction, and image co-registration. The ever-broadening use of deep learning in remote sensing is due to three trends: 1) ubiquitous, easy-to-use cloud computing infrastructure, including GPUs; 2) the development and increased adoption of easy-to-use machine learning tooling like Google’s TensorFlow, AWS SageMaker, and many open source frameworks; and 3) an expanding ecosystem of services for creating labeled training data (Scale, Figure Eight) as well as open labeled datasets like SpaceNet on AWS.

Deep learning, neural networks, and computer vision have also been used more frequently with SAR data in recent years. Most of the leading satellite earth observation analytics companies, like Orbital Insight, Descartes Labs, and Ursa, have been expanding the use of SAR data in their analytical workflows. From what I’ve seen, the areas that have benefited most from these techniques are object detection (automatic target recognition), land cover classification, change detection, and data augmentation. There also appears to be some recent investigation into how deep learning can benefit interferometric SAR analysis. However, using deep learning with SAR data comes with challenges. Among other things, there is a distinct lack of large labeled training data sets, and because SAR data has speckle noise and is less intuitive than optical data, it can be hard for both human labelers and models to classify features correctly.

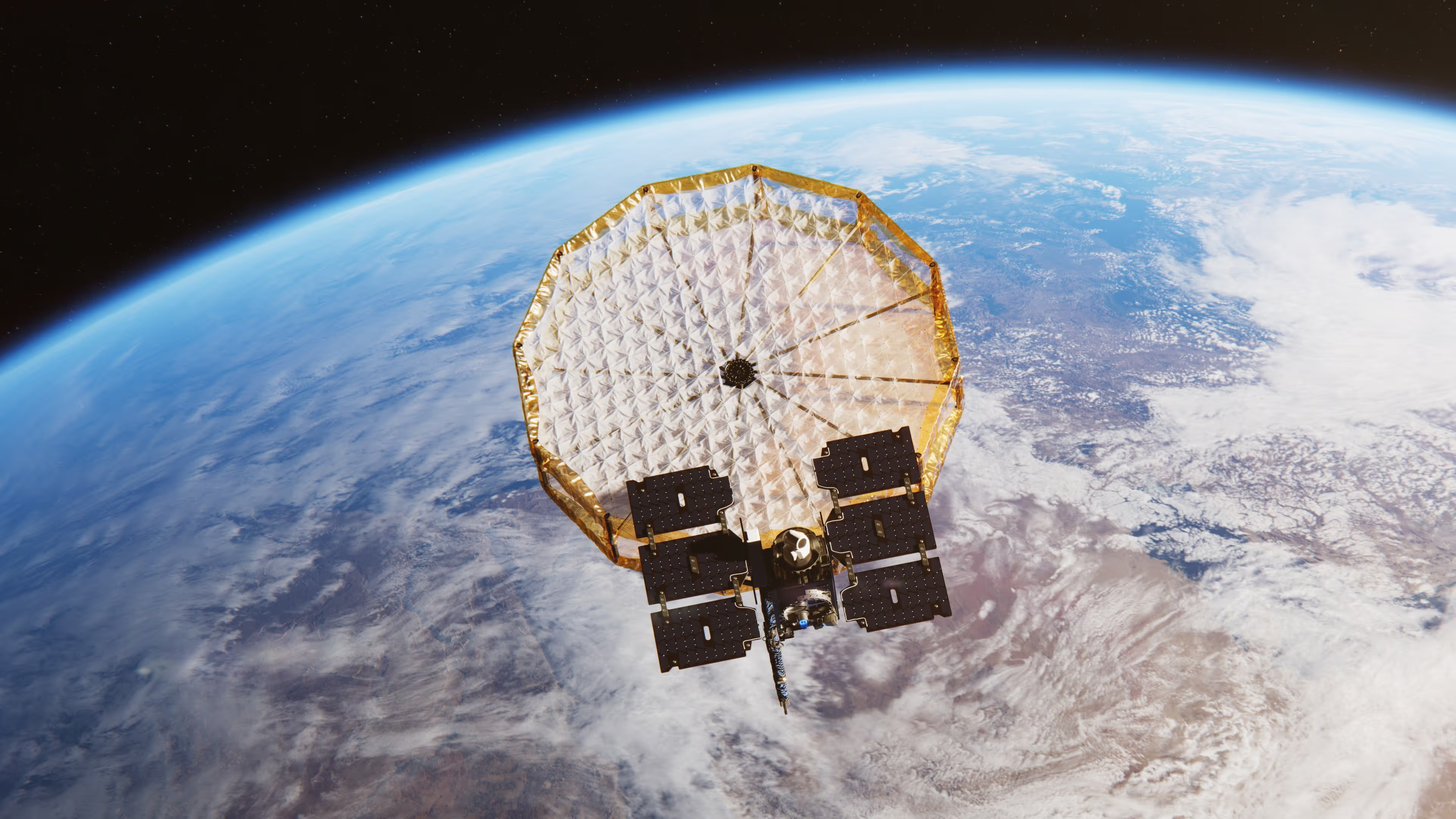

In this blog, I’ll highlight some of the applications that would benefit from the use of deep learning and high-revisit, high-resolution SAR data, like that from the Capella constellation. I’ll also outline some of the challenges that we are hoping to help tackle with the broader SAR user community.

Object Detection

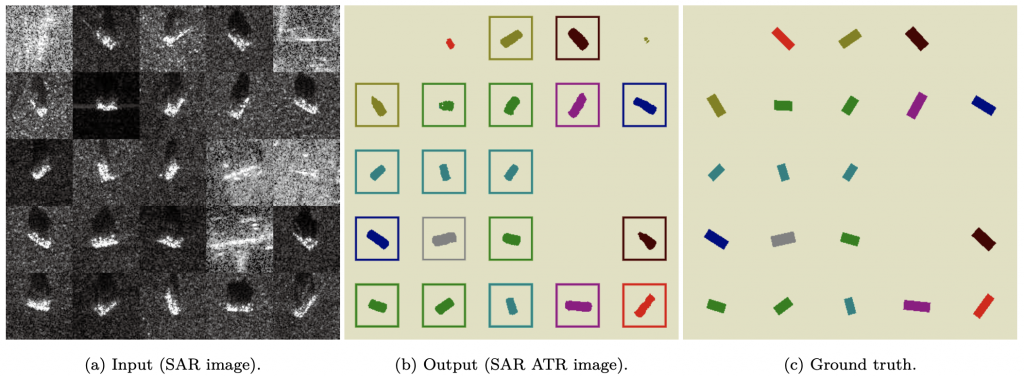

Automated target recognition with a CNN and the MSTAR dataset.

Most of the research and development in SAR deep learning applications has been directed toward object detection and land cover classification. In the world of SAR, object detection is commonly called automatic target recognition (ATR). Research in ATR originated in military applications and gained momentum in the 1990s, but has since expanded into a broad array of civilian and commercial use cases. A spectrum of ATR problems has been explored in the literature, ranging from finding a well-understood target in known terrain and clutter to identifying targets whose SAR response may differ markedly depending on view angle and occlusion by other targets. Generally, the problem involves finding relatively small targets (vehicles, ships, electricity infrastructure, oil and gas infrastructure, etc.) in a large scene dominated by clutter.
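To make the "small targets in a large scene dominated by clutter" framing concrete, here is a minimal sketch of a cell-averaging constant false alarm rate (CA-CFAR) detector. This is a classical, pre-deep-learning technique and is not a method described in this post. The function name `ca_cfar`, the window sizes, the threshold multiplier, and the synthetic scene are all illustrative assumptions.

```python
import numpy as np

def ca_cfar(intensity, guard=2, train=4, scale=8.0):
    """Flag pixels brighter than `scale` times the local clutter mean.

    The clutter mean is estimated from a ring of training cells around each
    pixel; a guard block around the pixel is excluded so that the target
    itself does not inflate the estimate. All defaults are hypothetical.
    """
    half = guard + train
    rows, cols = intensity.shape
    detections = np.zeros((rows, cols), dtype=bool)
    # Border pixels without a full window are left as "no detection".
    for r in range(half, rows - half):
        for c in range(half, cols - half):
            window = intensity[r - half:r + half + 1, c - half:c + half + 1]
            guard_block = intensity[r - guard:r + guard + 1, c - guard:c + guard + 1]
            n_train = window.size - guard_block.size
            clutter_mean = (window.sum() - guard_block.sum()) / n_train
            detections[r, c] = intensity[r, c] > scale * clutter_mean
    return detections

rng = np.random.default_rng(0)
# Synthetic clutter: single-look speckle with unit mean intensity (assumption).
scene = rng.exponential(scale=1.0, size=(64, 64))
# A small, bright synthetic "target" occupying 3 x 3 pixels.
scene[30:33, 40:43] += 50.0
hits = ca_cfar(scene)
```

A detector like this only says "something bright is here". Recognizing what the target is, across view angles and occlusion, is the harder classification problem.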

Recently, the use of convolutional neural networks (CNNs) has improved the performance of object recognition models for a variety of targets. The first appearance of CNNs in SAR ATR seems to be as recent as 2015, when it was demonstrated that CNNs were competitive with other methods considered state of the art at the time. Others have since expanded on this work and demonstrated >99% classification accuracy for features in the MSTAR data set, which consists of military vehicles. More civilian and commercial examples of CNN use have cropped up in recent years, including a project to map the power grid, a Kaggle competition to distinguish ships from icebergs, and some research into identifying floating oil rigs. Most of the work that I’ve come across so far focuses on radar backscatter images, but a number of papers have highlighted the potential of using phase data for additional target information.

Land Cover Classification

Classifying sea ice depth from Sentinel-1 data using a CNN.

Classification of larger features and land cover has also benefited from the application of deep learning approaches and weather-independent, reliable SAR monitoring. While the use of neural networks for SAR data classification is not new, the use of deep learning for land cover classification seems to have greatly increased since 2015, when fully convolutional neural networks were introduced. There has been a lot of research exploring the feasibility of using CNNs to classify common land cover, like roads, buildings, flood water, urban areas, and crops. Deep learning has also been used for some interesting atypical land cover (or water cover) applications, like identifying oil spills and classifying varying thicknesses of sea ice. Deep learning typically outperforms classical approaches, though it may not be more efficient in time and compute cost. As with all supervised learning techniques, however, performance is highly dependent on the quality of the labeled training data.

Change Detection

Detecting urban change in UAVSAR data using stacked autoencoders.

High-resolution, high-cadence SAR data is uniquely suited to change detection because it can see through clouds and captures changes in both the amplitude of the reflected energy and the phase coherence. I was surprised to find how much research there has been into the use of deep neural networks for change detection in SAR data. Unlike object detection, where CNNs are dominant, a variety of neural network methods have been used to identify surface changes in SAR data. Generally, the approaches either classify land cover in a multi-temporal stack and then compare the post-classification results, or classify the radiometric or phase differences between multi-temporal acquisitions. There are examples of the latter approach using restricted Boltzmann machines, PCANet, stacked autoencoders and multilayer perceptrons, supervised contractive autoencoders (sCAEs) and clustering, sCAEs and fuzzy c-means, and CNNs. Another interesting category of approaches takes both SAR and optical imagery as input and uses a deep convolutional coupling network to identify change in the heterogeneous data. Most of the research concludes that deep learning approaches perform better (lower false positive and false negative rates) than previous methods based on Markov random fields and principal component analysis.
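As a rough illustration of the "radiometric difference" idea, the sketch below computes a log-ratio image from two co-registered intensity images and applies a fixed threshold. The log-ratio operator is a common way to compare SAR intensities because speckle is multiplicative. The fixed threshold is a simple stand-in for the learned classifiers used in the research above, not a reproduction of any of them. The function name, the 5 dB threshold, and the synthetic data are assumptions.

```python
import numpy as np

def log_ratio_change(before, after, threshold_db=3.0, eps=1e-10):
    """Return a boolean change mask from two co-registered intensity images.

    The ratio is expressed in decibels; pixels whose intensity rose or fell
    by more than `threshold_db` are marked as changed.
    """
    ratio_db = 10.0 * np.log10((after + eps) / (before + eps))
    return np.abs(ratio_db) > threshold_db

rng = np.random.default_rng(1)
looks = 16  # hypothetical number of looks; more looks means less speckle
# Two acquisitions of the same uniform scene with independent, unit-mean speckle.
before = rng.gamma(shape=looks, scale=1.0 / looks, size=(50, 50))
after = rng.gamma(shape=looks, scale=1.0 / looks, size=(50, 50))
# A synthetic 10 dB brightening in one patch, e.g. new construction.
after[10:20, 10:20] *= 10.0

mask = log_ratio_change(before, after, threshold_db=5.0)
changed_fraction = mask[10:20, 10:20].mean()
background = mask.copy()
background[10:20, 10:20] = False
false_alarm_fraction = background.sum() / (mask.size - 100)
```

In the deep learning approaches, a difference image like `ratio_db` (or the image pair itself) becomes the network input, and the hand-picked threshold is replaced by a model trained to separate changed from unchanged pixels.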

Deformation Monitoring and InSAR

It seems that there hasn’t been much research to date into the use of deep learning methods to analyze interferograms or support InSAR processing. However, there is evidence of interest in this area, with some early research into identifying deformation patterns in wrapped phase using CNNs and funded projects integrating deep learning methods into InSAR processing chains. There has also been some more broadly applicable work on applying deep learning methods to phase unwrapping. We will likely see more published research on these topics in the coming years, as there are open PhD positions at various institutions focused on applying machine learning to InSAR workflows.
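For readers less familiar with the terms, the sketch below shows what "wrapped phase" and "phase unwrapping" mean in the simplest possible setting: a noise-free, one-dimensional profile. The phase ramp is a made-up example, and NumPy's `np.unwrap` is a classical 1-D routine, not one of the deep learning methods mentioned above.

```python
import numpy as np

# Hypothetical deformation phase along a 1-D profile, in radians.
true_phase = np.linspace(0.0, 6.0 * np.pi, 200)

# An interferogram only records phase modulo 2*pi, in the interval (-pi, pi].
wrapped = np.angle(np.exp(1j * true_phase))

# 1-D unwrapping: wherever neighbouring samples jump by more than pi,
# add the multiple of 2*pi that restores a smooth profile.
unwrapped = np.unwrap(wrapped)

# Largest difference between the recovered and the original phase.
max_error = np.abs(unwrapped - true_phase).max()
```

This works here because the profile is smooth and noise-free. Real interferograms are two-dimensional, noisy, and contain decorrelated areas, which is why unwrapping remains a hard problem and an attractive target for learned methods.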

Data Augmentation

Generating High Quality Visible Images from SAR Images Using a GAN.

There has been some fascinating research applying CNNs and generative adversarial networks (GANs) to SAR data enhancement and data fusion. One useful area of research, which also benefits object detection, is the use of CNNs to estimate and reduce speckle noise in SAR amplitude data. The result is a “clean” SAR image produced through a single feedforward process. It is also possible to improve the apparent resolution of SAR data using GANs. In the literature, there are examples of image translation from Sentinel-1 resolution to TerraSAR-X resolution. While these methods don’t appear to preserve feature structure very well, they offer an interesting view into the future of super resolution and style transfer for SAR. It’s also possible to use GANs to make high-resolution SAR data look more like optical imagery through despeckling and colorization, which could aid visual interpretation of SAR imagery. New data sets are now available that will help advance this type of work and other applications requiring SAR and optical data fusion.
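To show what speckle reduction has to undo, here is a small sketch of the standard multiplicative speckle model, followed by boxcar multilooking as a classical baseline. Multilooking is swapped in here for illustration; it is not the CNN despeckling approach from the literature, and it gives up resolution, which is the trade-off learned despecklers try to avoid. The function names and the synthetic scene are assumptions.

```python
import numpy as np

def add_speckle(reflectivity, rng):
    """Single-look intensity: reflectivity times unit-mean exponential noise."""
    return reflectivity * rng.exponential(scale=1.0, size=reflectivity.shape)

def multilook(intensity, factor):
    """Average non-overlapping factor x factor blocks of an intensity image."""
    rows, cols = intensity.shape
    rows -= rows % factor  # drop edge pixels that do not fill a block
    cols -= cols % factor
    blocks = intensity[:rows, :cols].reshape(
        rows // factor, factor, cols // factor, factor
    )
    return blocks.mean(axis=(1, 3))

rng = np.random.default_rng(2)
clean = np.full((128, 128), 2.0)       # uniform synthetic scene
noisy = add_speckle(clean, rng)        # same mean, very grainy
smoothed = multilook(noisy, factor=4)  # 16 looks, one quarter the resolution

# Coefficient of variation (std / mean): about 1 for single-look intensity,
# and it shrinks roughly with the square root of the number of looks.
cv_noisy = noisy.std() / noisy.mean()
cv_smoothed = smoothed.std() / smoothed.mean()
```

Because the noise scales with the signal, brighter areas are also noisier. This is part of what makes SAR imagery harder to label and model than optical imagery.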

Challenges

As I was reviewing the literature on these applications, a common challenge was evident: there is a dearth of high quality labeled SAR training data, particularly at high resolution. As with all supervised learning methods, the performance of the model and results are highly dependent on the training data inputs. The most commonly used high quality training data set available is the MSTAR data set, but this only contains a limited number of military features. Other researchers have resorted to creating their own annotated training data sets, but it can be incredibly expensive to assemble a large global data set as there are limited options for high resolution source data, and very few that have open licenses (e.g. UAVSAR). There are ways to enhance training data through simulation and transfer learning, but you still need a reasonable data set as a starting point. I plan to discuss this challenge, SAR training data in general, and Capella’s vision in a future blog post.
